Introduction

ClearerThinking has a tool called ‘Calibrate Your Judgement’. In the tool, they provide points to help you judge how well you are doing. I personally found the points unhelpful, so I just ignored them. However, I recently saw this paper by the creator of the tool, explaining the careful and detailed thought that went into creating the points system. Given this, I thought it would be useful for me to explain why I think the points are not good.

Context

The two main aims of the Calibrate Your Judgement tool are to:

Encourage you to think probabilistically. Instead of saying ‘I think the answer to this question is Z’, you say ‘I think there is a 50% chance the answer is in the range [X, Y]’.

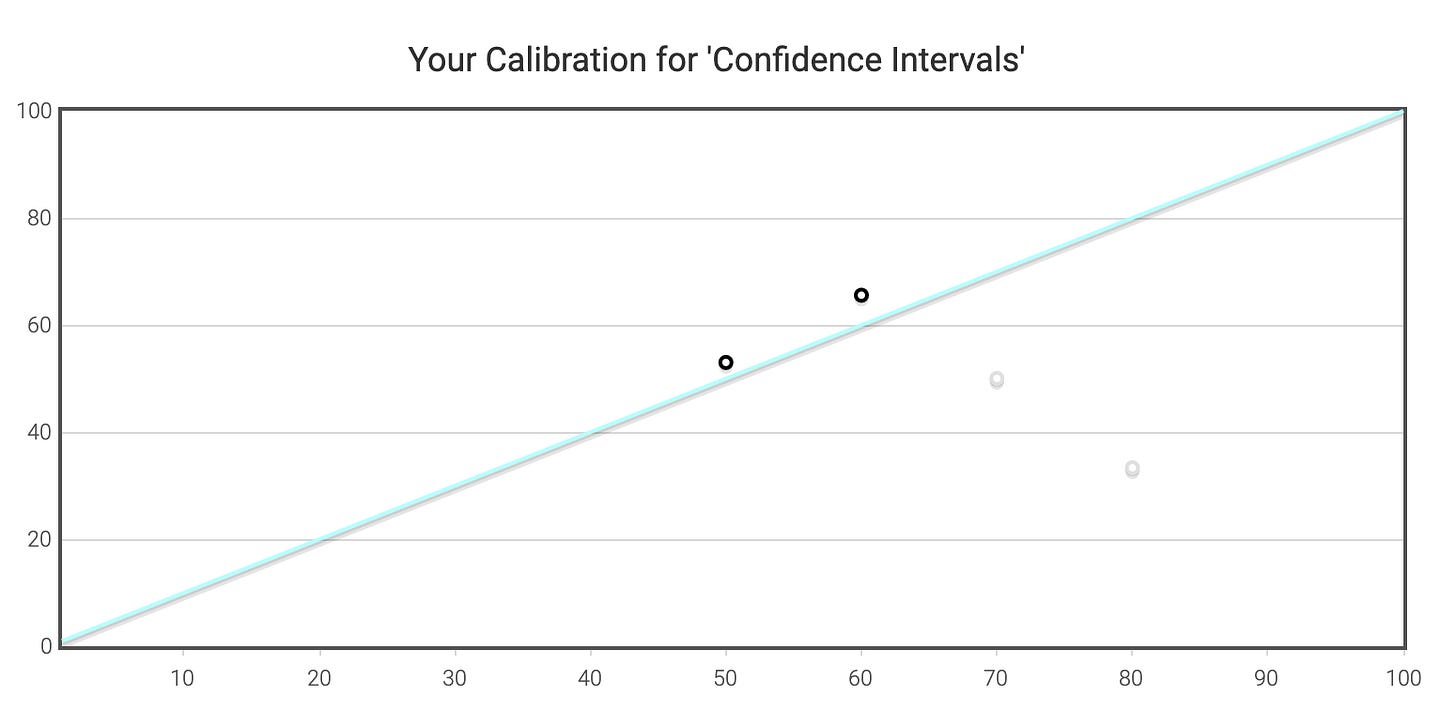

Help you calibrate your probabilities. If you make a large number of predictions of the form ‘There is a 50% chance of the answer being in [X, Y]’, then roughly half of those predictions should turn out to be correct.
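To make the idea of calibration concrete, here is a minimal Python sketch of how you could check it yourself. The function name `hit_rates` and the example data are my own inventions for illustration; this is not how the tool computes anything.

```python
from collections import defaultdict


def hit_rates(predictions):
    """Group interval predictions by stated confidence and return,
    for each confidence level, the fraction whose interval contained
    the true answer."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for confidence, low, high, answer in predictions:
        totals[confidence] += 1
        if low <= answer <= high:
            hits[confidence] += 1
    return {c: hits[c] / totals[c] for c in totals}


# Made-up data: (stated confidence, interval low, interval high, true answer)
example = [
    (0.5, 10, 20, 15),       # hit
    (0.5, 100, 200, 250),    # miss
    (0.5, 1, 5, 3),          # hit
    (0.5, 30, 40, 45),       # miss
    (0.9, 0, 1000, 500),     # hit
    (0.9, 1900, 1950, 1969), # miss
]

rates = hit_rates(example)
for confidence, rate in sorted(rates.items()):
    print(f"stated {confidence:.0%}, actual {rate:.0%}")
```

In this made-up data, the 50% intervals are right half the time, which is what good calibration looks like. The 90% intervals are also right only half the time, which is over-confidence. With so few predictions the numbers are very noisy, so in practice you would want many more predictions per confidence level.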

I *highly* recommend the tool. Just spending a few minutes each day for a few weeks will greatly improve your probabilistic thinking. For me, the main thing I learnt was how large 90% confidence really is.

The Points

To help you judge your answers, you are given some points and some textual feedback after each question. Here are some examples:

Based on these examples, you learn the following about the scores:

If the answer is in your interval, you get positive points. If the answer is outside your interval, you get negative points.

The smaller your interval (and hence, the more confident you are), the larger the magnitude of the points.

The further the answer is from your interval, the worse the punishment is.

They also provide summary statistics of the points:

My thoughts on the points

Here are the reasons I think the points are not good:

The points reward factual knowledge, rather than an accurate judgement of one’s own confidence. You get the highest points and best compliments (with three exclamation marks!) only when you know the exact answer. Consequently, the points also punish ignorance.

If you are loss averse (as most people are) and hence want to avoid negative points, you are incentivised to make your intervals larger. Alternatively, if you are a risk-taker, then you are incentivised to make your intervals smaller.

It is not clear if the points are correlated with the goal of being well calibrated. Am I trying to maximise the score or aim for a total of zero?

I do not know how to interpret the average points per question. Is 2 out of 10 good? Bad? Normal?

The one thing I do think is good about the points system is that it strongly punishes incorrect over-confidence.

Potential alternatives

I assume the creator has already thought of these, but just in case, here are the alternatives I can think of:

Remove the points system altogether.

Have points correspond to what would happen if you were making bets at the odds implied by your stated confidence. Then if you are well calibrated, your net gains should be zero on average.

The main issue with the second option is that it is independent of the size of the interval, which does not feel ideal. On the other hand, being independent of the size of the interval means that the points are independent of the person’s knowledge, which is good.
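Here is a rough sketch of what betting-style points could look like. It assumes a fair bet at your stated confidence p: you win 1 - p points if the answer is in your interval and lose p points if it is not. The function names and the example results are hypothetical, and this is only one of several ways to set up the bets.

```python
def bet_points(confidence, hit):
    """Points for one prediction under a fair bet at the stated confidence.

    Assumed payoff: win (1 - confidence) on a hit, lose confidence on a miss.
    The interval itself never appears, so its width has no effect.
    """
    return (1 - confidence) if hit else -confidence


def total_points(results):
    """Sum the betting points over (confidence, hit) pairs."""
    return sum(bet_points(confidence, hit) for confidence, hit in results)


# Made-up results: ten 50% intervals, five of which contained the answer.
calibrated = [(0.5, True), (0.5, False)] * 5

# Made-up results: ten 90% intervals, only five of which contained the answer.
overconfident = [(0.9, True)] * 5 + [(0.9, False)] * 5

print(total_points(calibrated))     # zero: hit rate matches stated confidence
print(total_points(overconfident))  # about -4: claimed 90%, achieved 50%
```

With this payoff, the expected points for a single prediction are p(1 - p) - (1 - p)p = 0 whenever your true hit rate equals your stated confidence p. Your total therefore hovers around zero if you are well calibrated and drifts negative if you are over-confident. Note that `bet_points` takes no interval as input, which is exactly the independence from interval size discussed above.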

The main benefit of the first option is that you can focus on what is important, which is being well-calibrated. And the chart provided seems to be the best way to visualise that:

Update. I had a conversation with the creator of the tool, Spencer Greenberg. Here is a summary of that conversation and my thoughts after it. https://lovkush.substack.com/p/an-anecdotal-critique-of-clearerthinkings-f87